Beyond the Virtual Agent: Engineering True Physical AI for the Real World

Madhu Gaganam

2/22/2026 · 3 min read

We are living through an explosion of digital capabilities. Frameworks that let language models act (routing commands, calling APIs, executing digital workflows) have changed how we interact with software. It is a massive leap forward for the virtual world.

As we look to bring this intelligence into physical spaces, a natural assumption emerges: we can simply take these virtual, asynchronous agents, put them inside a robot chassis, and achieve true Physical AI. But treating a factory floor like a digital workspace is a fundamental engineering error.

The physical world does not run on API calls alone; it runs on physics. When we cross the boundary from the virtual to the industrial, the rules of engagement change entirely. Here is why the current "agents everywhere" narrative falls apart on the factory floor, and what true Physical AI actually requires.

The Illusion of Speed: The 800 Millisecond Catastrophe

Virtual agents are built for asynchronous cognitive tasks. The architecture of routing a command, processing it through a probabilistic text model, and executing a tool inherently creates latency. Moving that agent from a remote server to a local on-premises GPU removes the network round trip, but it does not remove the delay, because the latency is built into the architecture. In the digital world, waiting 800 milliseconds for a file to parse is a seamless experience.

In a manufacturing environment, 800 milliseconds is a catastrophe.

Consider the physics: a typical industrial robot operates at speeds of roughly 1.5 meters per second. If an unexpected anomaly occurs, such as a worker stepping into a collaborative zone or a tool slipping, an 800-millisecond cognitive delay means the robotic arm will travel 1.2 meters (1.5 m/s × 0.8 s, nearly 4 feet) completely blind before the system even generates the command to brake. On a tightly packed factory floor, that makes a severe collision all but certain.
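The arithmetic behind that figure is simple enough to check directly. The sketch below is illustrative only: the function name `blind_travel_m` is hypothetical, it assumes constant speed during the delay, and it ignores the additional distance the arm needs to actually brake once the command arrives.

```python
def blind_travel_m(speed_mps: float, latency_s: float) -> float:
    """Distance covered at constant speed before any reaction begins."""
    if speed_mps < 0 or latency_s < 0:
        raise ValueError("speed and latency must be non-negative")
    return speed_mps * latency_s


# Compare the 800 ms agent delay with the 50 ms and 10 ms budgets
# discussed in this article, all at the article's 1.5 m/s example speed.
for latency_ms in (800, 50, 10):
    distance = blind_travel_m(1.5, latency_ms / 1000)
    print(f"{latency_ms:>4} ms -> {distance:.3f} m")
```

At 1.5 m/s, the same calculation gives 0.075 m for a 50 ms delay and 0.015 m for a 10 ms delay, which is the gap between a near miss and a collision.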

Industrial robotics demands deterministic guarantees. The lower-level motor controls and safety interlocks, the machine’s muscle memory, must operate in the sub-10 ms range. Any higher-level cognitive system reacting to the environment must in turn complete its entire sensor-to-action reflex arc in under 50 ms. Virtual, asynchronous agents simply cannot survive this physical reality.
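One way to picture a reflex budget is as a deadline that every sense-decide-act cycle either meets or forfeits. The following is a minimal sketch under stated assumptions, not a real-time implementation: `reflex_step` and its callback parameters are hypothetical names, the 50 ms constant comes from the figure above, and Python itself offers no hard real-time guarantees. A production system would enforce the deadline with a hardware watchdog or an RTOS rather than checking elapsed time after the fact.

```python
import time
from typing import Any, Callable

REFLEX_BUDGET_S = 0.050  # the sub-50 ms sensor-to-action budget


def reflex_step(
    read_sensors: Callable[[], Any],
    decide: Callable[[Any], Any],
    actuate: Callable[[Any], None],
    safe_stop: Callable[[], None],
    budget_s: float = REFLEX_BUDGET_S,
    clock: Callable[[], float] = time.perf_counter,
) -> bool:
    """Run one sense-decide-act cycle; fall back to a safe stop on overrun.

    Returns True if the command was issued within the budget,
    False if the deadline was missed and safe_stop() was called instead.
    """
    start = clock()
    reading = read_sensors()
    command = decide(reading)
    elapsed = clock() - start

    if elapsed > budget_s:
        # The decision is stale: the world has moved on, so do not act on it.
        safe_stop()
        return False

    actuate(command)
    return True
```

The design choice worth noting is that a late answer is treated as a wrong answer: the stale command is discarded rather than executed.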

Beyond Actuation: Sense and Adapt

The prevailing tech narrative mistakenly equates Physical AI with putting a brain in a jar on top of a machine. It treats actuation as if it were a simple database write operation, a discrete command sent to a motor.

True Physical AI is far more complex than routing text commands. It is deeply rooted in continuous multimodal sensor fusion.

When an industrial system interacts with the physical world, it must process high-bandwidth streams of data. It requires haptic feedback, torque sensing, and continuous spatial awareness. It requires the ability to sense and adapt. Virtual agents are effectively numb, processing only discrete, static data points. Physical AI must be powered by Edge AI, processing massive sensory streams locally and in real time to adapt to physical nuances.
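To make "sense and adapt" concrete, here is a deliberately tiny sketch of fusing two signals, a torque residual and a proximity reading, into a single speed multiplier. Everything in it is an assumption for illustration: the `SensorFrame` type, the `speed_scale` function, and all three threshold values are hypothetical, and a real system would fuse many more modalities at far higher rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SensorFrame:
    torque_nm: float           # measured joint torque
    expected_torque_nm: float  # torque predicted by the motion model
    proximity_m: float         # distance to the nearest detected obstacle


# Hypothetical thresholds, chosen only to make the example readable.
TORQUE_RESIDUAL_LIMIT_NM = 5.0
STOP_DISTANCE_M = 0.3
FULL_SPEED_DISTANCE_M = 1.5


def speed_scale(frame: SensorFrame) -> float:
    """Return a speed multiplier in [0, 1] from torque and proximity."""
    residual = abs(frame.torque_nm - frame.expected_torque_nm)
    if residual > TORQUE_RESIDUAL_LIMIT_NM:
        return 0.0  # unexpected force: treat as contact and stop
    if frame.proximity_m <= STOP_DISTANCE_M:
        return 0.0  # obstacle inside the stop zone
    if frame.proximity_m >= FULL_SPEED_DISTANCE_M:
        return 1.0  # nothing nearby: full speed allowed
    # Scale linearly between the stop distance and the full-speed distance.
    span = FULL_SPEED_DISTANCE_M - STOP_DISTANCE_M
    return (frame.proximity_m - STOP_DISTANCE_M) / span
```

Neither signal alone is enough: proximity cannot see a slipping tool, and torque cannot see a person who has not yet been touched.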

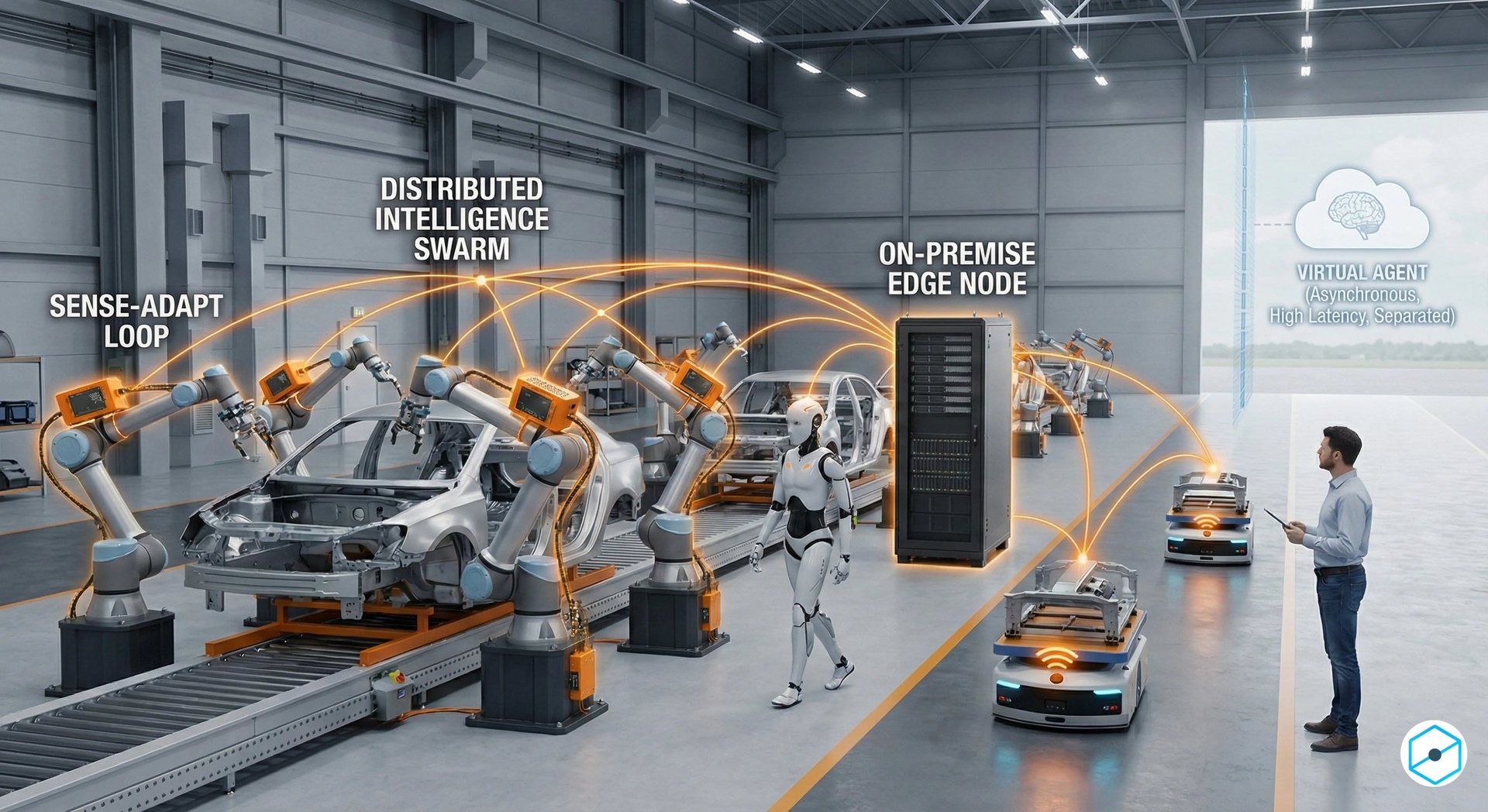

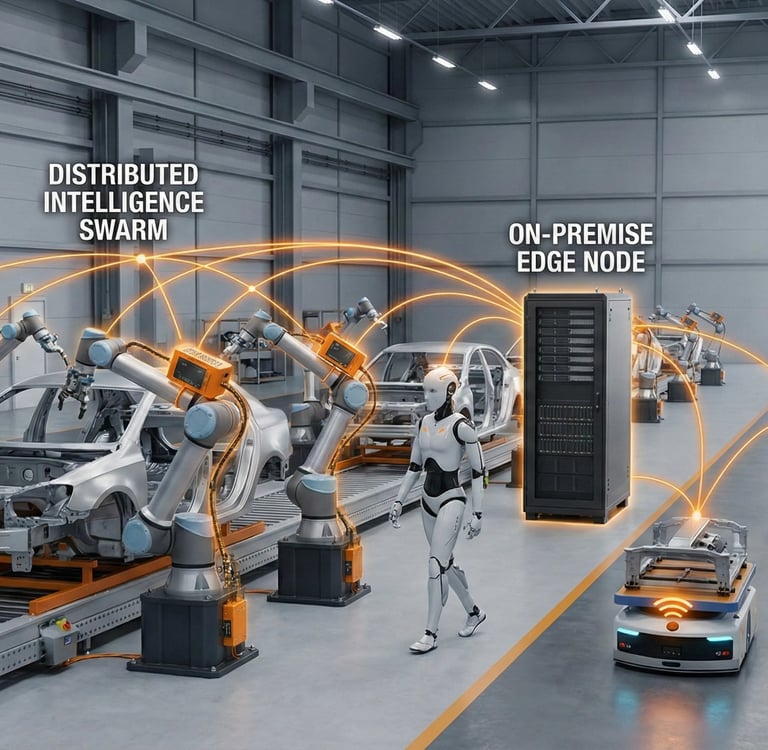

Separation of Concerns: Swarm Syncing and Security

There is immense value in the architectural concepts virtual agents use, such as strict identity management and defined workspaces. In fact, this is precisely how industrial environments must be structured, but with much harder, physical boundaries.

We must draw an ironclad line between the personal agents that support office workflows and the deterministic systems that support operations on the factory floor. They should remain strictly separated, interacting only across highly secure, verifiable digital twin interfaces.

On the factory floor, intelligence must be distributed. Swarm syncing between machines and seamless collaboration with humans require local edge computing. This can be facilitated by a powerful on-premises edge node that acts as a local conductor, coordinating the swarm’s collective intelligence and sharing critical data without the latency or security risk of relying on a centralized virtual agent.

If a cognitive layer is deciding on a broad operational strategy, that intelligence cannot have direct, unbounded access to physical actuators. The cognitive layer and the execution layer must remain fiercely separated by hardware-level safety interlocks that cannot be overridden by software logic.
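The separation between cognitive and execution layers can be sketched as a gate that the cognitive layer must pass through but cannot configure. This is only a software-side model with hypothetical names (`MotionRequest`, `ExecutionGate`, `interlock_closed`): a real interlock is a physical circuit, and the point of the sketch is that the gate merely reads its state and never gets a way to write it.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class MotionRequest:
    target_speed_mps: float  # what the cognitive layer asks for


class ExecutionGate:
    """Bounds every request and defers to a read-only interlock signal."""

    def __init__(self, interlock_closed: Callable[[], bool], max_speed_mps: float):
        # interlock_closed stands in for a hardware input; there is
        # deliberately no method here that can change its value.
        self._interlock_closed = interlock_closed
        self._max_speed_mps = max_speed_mps

    def admit(self, request: MotionRequest) -> Optional[MotionRequest]:
        """Return a bounded request, or None if the interlock is open."""
        if not self._interlock_closed():
            return None  # hardware says stop; no request gets through
        bounded = min(max(request.target_speed_mps, 0.0), self._max_speed_mps)
        return MotionRequest(target_speed_mps=bounded)


# Usage: the cognitive layer asks for 1.5 m/s in a zone limited to 0.5 m/s.
gate = ExecutionGate(interlock_closed=lambda: True, max_speed_mps=0.5)
print(gate.admit(MotionRequest(target_speed_mps=1.5)))
```

The cognitive layer can propose anything it likes; what reaches the actuators is whatever survives the bounds and the interlock.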

The Path Forward is Edge AI

We cannot software-engineer our way out of the laws of physics. Physical AI is not about running a generalized agent on a robot to see what it can do. It is about deploying specialized Edge AI that dynamically adapts to its environment, respects the limitations of hardware, and guarantees human safety through deterministic control.

The virtual agents of today are brilliant tools for the digital workspace. But when it comes to the factory floor, we need systems built from the ground up for the realities of steel, gravity, and motion.